GrabAds Platform

Designing a 0-to-1 reporting platform for one of Southeast Asia's largest super-apps

Industry

AdTech / Enterprise SaaS

Company

Grab

Role

Senior Product Designer

Year

2021-2022

Some screens have been altered or obscured to respect client confidentiality.

In 2021, generating a single performance report for a GrabAds campaign was a days-long process. The ops team would pull data from multiple tools, assemble it manually, and send it out as a PDF. GrabAds was already a meaningful revenue stream, and the ops overhead required to run it was the ceiling on how far it could grow.

I led the reporting experience within a larger 0-to-1 build: a unified ads management platform bringing campaign creation, targeting, and reporting into one product. The Ads platform was envisioned as part of Grab's broader merchant portal, a suite of tools helping business owners manage their stores, orders, and advertising in one place. I worked as part of the Obvious team alongside another product designer, with a separate sub-team handling campaign management and targeting.

The problem with reporting

Grab's ads ops team was working across Excel, Tableau, and other internal tools to track campaign performance. There was no unified view. Reports were assembled manually and delivered as PDFs, a process that introduced delays, version confusion, and significant coordination overhead.

The immediate users were Grab's internal ops team, though the platform was being designed with a longer horizon in mind: eventually, external advertisers would run campaigns themselves.

I came into this project with no prior AdTech experience. The domain complexity turned out to be less of a barrier than expected. At the product level, the problem was familiar: a workflow that had grown organically across too many tools, with no designed experience holding it together.

Key decisions

Establishing hierarchy in a data-heavy surface

The reporting dashboard was a 0-to-1 design problem. There was no existing product to work from, just a set of ops workflows that had never been translated into a UI.

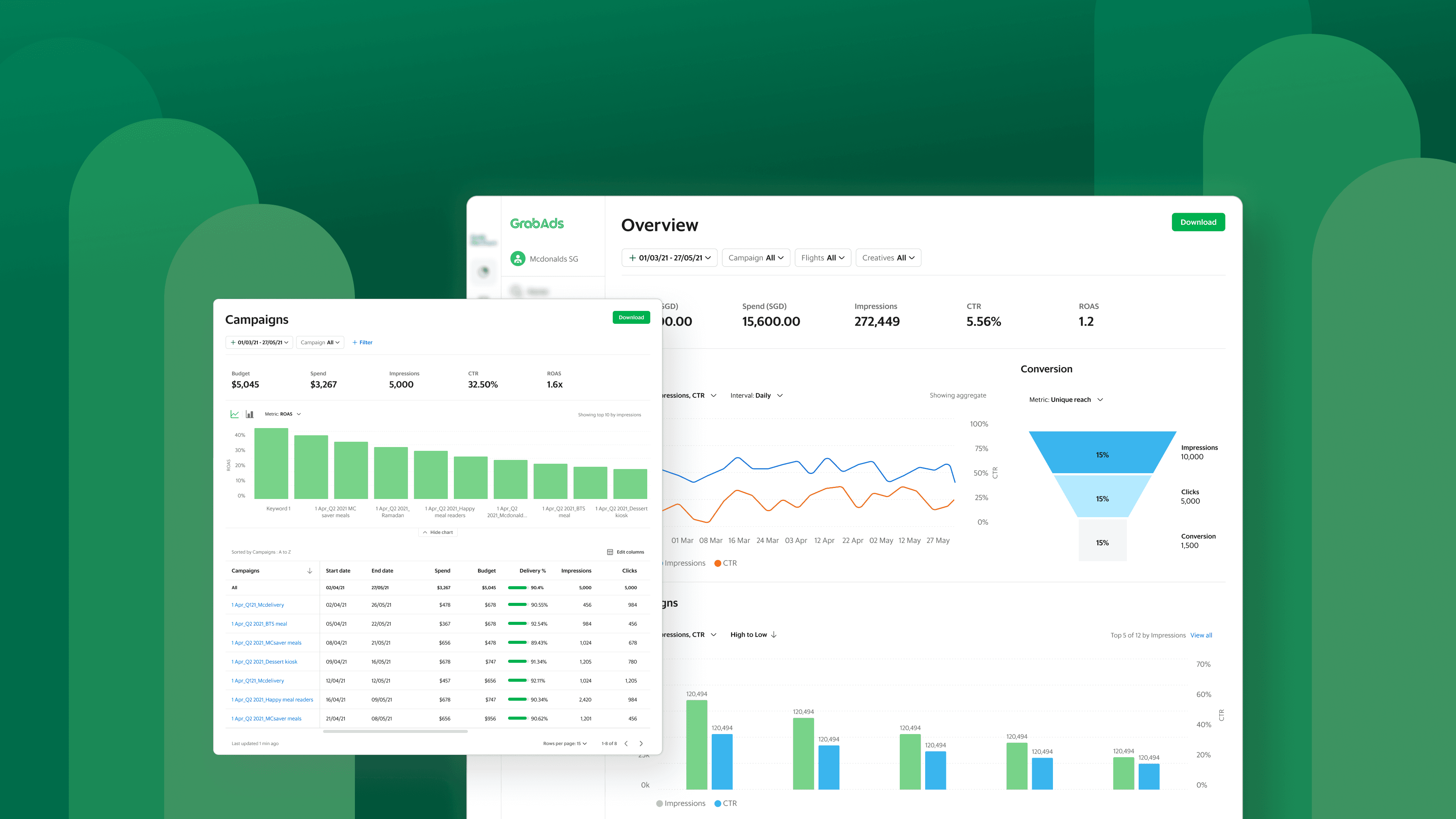

The Overview dashboard surfaces key metrics, trend data, and performance breakdowns across campaigns, flights, creatives, keywords, geo, and time — all in one view.

The core tension was between completeness and clarity. GrabAds tracked a large number of campaign metrics, and the ops team needed access to most of them. Surfacing everything at equal weight would produce a dashboard that required training to read, which a future self-serve product couldn't afford.

The approach was a clear information hierarchy: primary metrics (reach, spend, conversion) always visible at the top level, with secondary metrics accessible through a customisable view. Power users could get to the full data set without it overwhelming the default experience.

This hierarchy came out of interviews with the ops team early in the project. Understanding which metrics they actually made decisions from, versus which they documented for compliance or clients, was what made the prioritisation possible.

Building for two audiences at once

Grab's internal ops team and future external advertisers cared about different things. The ops team needed granular data for internal reporting and client delivery. An advertiser using the platform independently would want a cleaner, more interpretive view of their campaign's performance.

Advertisers can select and reorder dimensions and metrics to customise what appears in their report table, tailoring the view to what matters most for their campaign type.

Early interviews with enterprise advertisers added a layer of nuance to this. Even self-serve advertisers wouldn't rely solely on GrabAds reporting. They were running campaigns across multiple platforms (Meta, Alibaba, Lazada) and doing their own consolidated analytics externally. The platform wasn't going to replace that workflow; it needed to fit within it. This shaped the decision to build a Download feature (PDF, Excel, CSV), so advertisers could pull GrabAds data into their own reporting stack without friction.

Rather than designing for one audience and retrofitting later, we built a flexible report builder that let users select the metrics they wanted to see and configure their view. This kept the underlying product the same for both audiences while letting the experience adapt to their needs. Reports became a configurable, on-demand output rather than a static PDF assembled by hand.
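As a rough illustration of the idea, and not the platform's actual implementation, a configurable report view can be modelled as an ordered selection of dimensions and metrics applied to the same underlying rows. Everything in this sketch is hypothetical: the `ReportView` and `build_table` names, the field names, and the sample data. The default metric set simply reuses the primary metrics named earlier.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical default set, reusing the primary metrics named in this case study.
PRIMARY_METRICS = ["reach", "spend", "conversion"]


@dataclass
class ReportView:
    """A table configuration: which columns appear, and in what order."""

    dimensions: list[str] = field(default_factory=lambda: ["campaign"])
    metrics: list[str] = field(default_factory=lambda: list(PRIMARY_METRICS))

    def columns(self) -> list[str]:
        # Dimensions come first, then metrics, each in the user's chosen order.
        return self.dimensions + self.metrics


def build_table(rows: list[dict], view: ReportView) -> list[list]:
    """Project raw rows onto the view's columns; missing values become None."""
    header = view.columns()
    return [header] + [[row.get(col) for col in header] for row in rows]


# Illustrative data only, not real GrabAds figures.
rows = [
    {"campaign": "A", "flight": "F1", "reach": 1200, "spend": 300.0,
     "conversion": 45, "clicks": 210},
    {"campaign": "B", "flight": "F2", "reach": 800, "spend": 150.0,
     "conversion": 12, "clicks": 95},
]

# Default view: primary metrics only.
default_table = build_table(rows, ReportView())

# Customised view: a different selection and order over the same rows.
custom_table = build_table(
    rows,
    ReportView(dimensions=["campaign", "flight"], metrics=["spend", "clicks"]),
)
```

The point of the sketch is that both audiences read from the same data. Only the view configuration differs, which is what let the default stay simple while the full data set remained reachable.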

Filtering at two levels

Filtering was built in two phases. Primary filters let users scope reports by campaign, flight, or creative, covering the baseline need for any reporting surface. Advanced filters came next: a conditional logic builder that let ops teams slice high-volume data by metric, rule, and value.

A conditional logic filter builder lets ops teams slice high-volume data by metric, rule, and value — going beyond basic scoping to support deeper performance analysis.

The distinction mattered because the two modes served different jobs. Primary filters were about navigation and focus. Advanced filters were about analysis: finding underperforming segments, comparing spend against delivery, fine-tuning campaigns without leaving the platform. Both contributed to the same goal: reducing reliance on manual exports and external tools, and moving the platform closer to genuinely self-serve.
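To make the "metric, rule, value" structure concrete, here is a minimal sketch of how such a condition could be represented and applied. It is an assumption-laden illustration, not the shipped logic: the `Condition` class, the rule names, the choice to combine conditions with AND, and the sample rows are all hypothetical.

```python
from __future__ import annotations

import operator
from dataclasses import dataclass

# Hypothetical rule names mapped to standard comparison functions.
RULES = {
    "greater_than": operator.gt,
    "less_than": operator.lt,
    "equals": operator.eq,
}


@dataclass(frozen=True)
class Condition:
    """One row of the filter builder: a metric, a rule, and a value."""

    metric: str
    rule: str
    value: float

    def matches(self, row: dict) -> bool:
        actual = row.get(self.metric)
        if actual is None:
            # Rows without the metric never match.
            return False
        return RULES[self.rule](actual, self.value)


def apply_filters(rows: list[dict], conditions: list[Condition]) -> list[dict]:
    """Keep rows that satisfy every condition (AND semantics, an assumption)."""
    return [row for row in rows if all(c.matches(row) for c in conditions)]


# Illustrative data only, not real GrabAds figures.
rows = [
    {"campaign": "A", "spend": 300.0, "conversion": 45},
    {"campaign": "B", "spend": 150.0, "conversion": 12},
    {"campaign": "C", "spend": 420.0, "conversion": 9},
]

# "High spend, low conversion": only campaign C satisfies both conditions.
underperforming = apply_filters(
    rows,
    [
        Condition("spend", "greater_than", 200.0),
        Condition("conversion", "less_than", 20),
    ],
)
```

Stacking conditions like this is the kind of analysis that previously meant exporting the data and filtering it in a spreadsheet.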

One platform, not two features

The ads platform was built by two sub-teams: reporting on one side, campaign management on the other. The risk with parallel workstreams is a product that feels disjointed at the joins.

From any report table, users can view campaign details or jump directly into editing — keeping the workflow within the platform rather than switching between tools.

The mitigation was to treat the connections between surfaces as a first-class design concern from the start. Every report table links directly to the relevant campaign, flight, or creative. An ops team member looking at performance data could act on what they saw immediately, without losing context or navigating back to a home screen.

This required close coordination on information architecture, shared navigation patterns, and consistent labelling across both surfaces. The goal was a coherent, unified experience regardless of which part of the platform you were in.

Validation and iteration

We tested the design with Grab's ads ops team in prototype sessions. The overall response to the reporting UI was positive, but the sessions surfaced a few specific issues worth acting on.

Discoverability was the main friction point. Features like filters and column editing weren't prominent enough in the initial design. Users either missed them or took longer than expected to locate them. Both were made more visible in a subsequent update.

The sessions also produced useful input on the summary cards at the top of each report. The default metrics shown mattered more than expected, and they weren't universal. Ops teams working on food campaigns prioritised different metrics than those working on non-food. The summary cards were updated to reflect campaign type, surfacing the most relevant metrics by default rather than a one-size-fits-all set.

One other pattern emerged around navigation: users moved naturally through report levels in a consistent sequence, from campaigns down to flights and creatives. This confirmed the hierarchy the reporting structure was built around and informed how navigation between report types was refined in later iterations.

Outcome

The platform launched internally to Grab's ads ops team. Extending it to external advertisers was the planned next phase, and the report builder architecture was designed to support that without a structural rebuild.

Campaigns report and Overview dashboard

Days to Hours

Report generation moved from a multi-day manual process to on-demand, within the platform

3 tools to 1

Excel, Tableau, and internal exports unified into a single ads management platform

Reflection

Coming into an unfamiliar domain with a tight delivery timeline pushed me to learn quickly and prioritise ruthlessly. The ops team interviews were the most valuable investment of the project. Without a clear picture of how they actually worked, the dashboard would have been shaped by assumptions about what an analytics product should look like rather than what this team needed.

One thing research with enterprise advertisers made clear early on: self-serve didn't mean standalone. These advertisers were running campaigns across multiple platforms and consolidating their own analytics externally. Designing for that reality, rather than assuming GrabAds reporting would replace their existing workflow, was one of the more grounding insights of the project. The Download feature was a direct outcome of that understanding.

Looking back, I'd have pushed harder on the visual design. Because this was a 0-to-1 product and we were aligning broadly with the merchant platform's visual language, the safe path was to prioritise functionality and consistency over exploration. That was the right call for the timeline, but there was likely room for a more considered, visually interesting direction than the one we took.

The broader lesson: on data-heavy products, structure is the design. Getting the information hierarchy right mattered more than any visual decision that followed.